When Building World-Class AI for Autonomous Vehicles, a Partner Like Appen Gets Your Team There Faster, With Data You Can Rely On

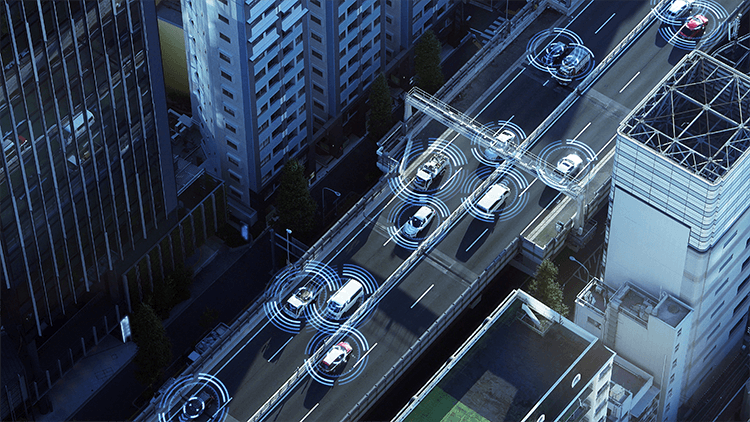

There is an enormous business opportunity in the rise of autonomous vehicles and the connected car. We have already seen significant investment in self-driving technology, with everyone racing to reap the benefits, including fewer road accidents, Mobility-as-a-Service (MaaS), reduced traffic, and improved logistics and haulage services. The auto industry is undergoing a profound transformation, with AI and electrification changing the way companies build cars and influencing the type of customer who will ultimately buy or use those cars. The smart car that works for everyone will be built with world-class AI, ultra-fast connectivity, and environmental impact in mind.

Challenges with Today’s Building Blocks of Self-driving

Companies are investing heavily in self-driving technology and the future of the connected car. Teams building a fully autonomous vehicle, improving driver assistance features, or working on anything in between often have to use multiple vendors and applications to collect, label, prepare, and consolidate the data they need to train their AI models effectively. Building the future of transportation is complicated enough without having to connect dozens of data pipeline components and integrate dozens of APIs. For a car to “see,” “hear,” “understand,” “talk,” and “think,” its machine learning models need video, image, audio, text, LiDAR, and other sensor data that has been correctly collected, structured, and understood. With autonomous vehicles, as with healthcare and other use cases where human lives are at risk, training data needs to be annotated and verified by humans at scale so that machines come as close as possible to 100% accuracy. Add the fact that cars must not only comply with strict national and regional regulations but also understand hundreds of languages and dialects, and the challenge multiplies. Putting the building blocks together shouldn’t be this difficult.

The Single-source Approach to Multi-modal AI Needed for Smart Cars that Work for Everyone

Teams that choose to work with proven, reliable, global partners to address their multi-modal AI needs for self-driving cars will likely get there faster. Just imagine how much time it takes to run and combine multiple collection and annotation jobs. Now think about doing this in 130 countries and more than 180 languages and dialects, each with its own data sets. The process is prone to delays and manual error, and it quickly becomes very expensive.

Using a single source for your data can help reduce risk by automating multi-step projects, breaking complex tasks down into simple jobs, and routing them through a flexible process, all coordinated within a single pipeline. This significantly reduces the operational burden of building and deploying world-class AI.

Imagine being able to do 2D, 3D, and audio annotation in one process stream for the multi-modal perception systems of self-driving vehicles. Imagine also tapping into cutting-edge ML-powered tools for fast 3D point cloud labeling (LiDAR, radar), video object and event tracking, and pixel-level 2D labeling, including semantic segmentation.

With automotive AI, technology leaders must work to reduce complexity. Niche vendors work fine until you have to automate and integrate different systems and data types, which creates bottlenecks and data compatibility issues. Building your own systems creates different problems: now you are not only deploying your AI but also allocating resources to build, improve, and maintain non-core software.

Much of that complexity goes away when you choose the best tools and partners to achieve your goals. After all, your teams should be focused on building and training models, not on collecting and preparing data or maintaining custom software you had to build.

Cars that Work for Everyone

When you build smart cars that work for everyone, they need to work in every market. Leaders building self-driving cars are thinking beyond simple efficiency, speed, and cost. We need to remove bias from the data so that the AI recognizes everything and everyone equally. As a top OEM or Tier 1 automotive supplier, you want your customers to be safe and understood by the cars they use, regardless of their ethnicity, gender, age, or geography. What’s more, leaders must consider the impact of their supply chain. An ethics-first approach to the data that goes into unbiased AI creates a positive global impact and offsets some of the disruption that Level 5 self-driving will bring to the world.

At Appen, we bring 25 years of training data experience and consistent quality to help accelerate self-driving capabilities. Our annotation tools, including ML-assisted LiDAR, video, event, and pixel-level labeling, as well as speech and natural language tools, are interconnected through Workflows to deliver higher productivity to a market racing to name a winner.

Our Difference:

- Our 1M multilingual Crowd and ability to scale

- On-prem solutions, end-to-end managed services

- Workflows for multi-step labeling of multi-modal data and complex labeling tasks

- Ethical AI – GDPR, CCPA, Fair Pay pledge, diversity and inclusion at the core of our AI training data practice